SSD-LeNet-based method for mine moving target detection and recognition


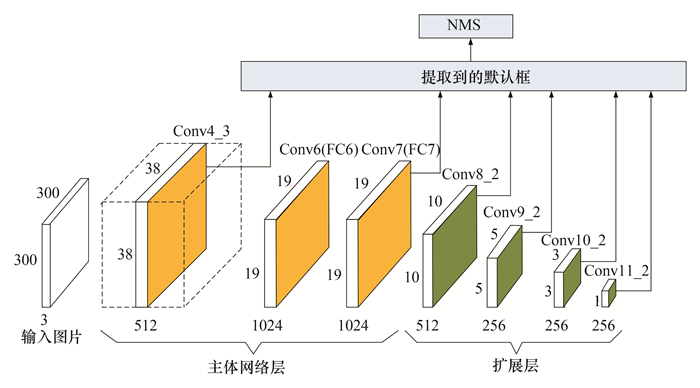

Abstract: To address the low detection accuracy and poor real-time performance of mine moving target detection and recognition in foggy, dusty, and low-illumination underground environments, a detection and recognition method based on SSD-LeNet is proposed. A visual sensor captures a frame of the original image of the mine moving target, which serves as the model input. A digital identifier is embedded at a specific position in the moving target image, and a data set containing the position information of the digital sequence is built from these images. A single shot multibox detector (SSD) model, trained offline, outputs the target feature categories corresponding to their positions, and the trained SSD model is then used to detect the position of the digital sequence on the moving target images in the test set. Characters are segmented within the rectangular region corresponding to the digital sequence position, and the segmented single characters are fed one by one into the LeNet (LeNet-5) network for recognition. The recognized characters are combined in order into a digital sequence, from which the identity information of the moving target is quickly retrieved. The results show that, compared with other deep-learning target detection and recognition methods, the proposed method achieves higher accuracy and stronger robustness for target detection and recognition in low-illumination and noisy mine environments, and can meet real-time requirements.
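The stages after detection (crop the detected rectangle, split it into characters, classify each character, join the results) can be sketched as follows. This is a minimal illustration, not the authors' implementation: the abstract does not say how characters are segmented, so a vertical projection profile is assumed here, and `crop_box`, `segment_characters`, `read_sequence`, and the stand-in classifier `width_as_label` are hypothetical names. In a real system the box would come from the trained SSD model and the classifier would be the trained LeNet network.

```python
def crop_box(image, box):
    """Crop the rectangle (x_min, y_min, x_max, y_max) from a 2-D grayscale image."""
    x0, y0, x1, y1 = box
    return [row[x0:x1] for row in image[y0:y1]]


def segment_characters(region, threshold=0.5):
    """Split a region into characters at columns that contain no foreground pixels.

    Assumption: characters are separated by at least one empty column
    (vertical projection profile); the source does not specify the method.
    """
    width = len(region[0]) if region else 0
    has_ink = [any(row[x] > threshold for row in region) for x in range(width)]
    chars, start = [], None
    for x, filled in enumerate(has_ink):
        if filled and start is None:
            start = x                      # a character begins
        elif not filled and start is not None:
            chars.append([row[start:x] for row in region])
            start = None                   # the character ended
    if start is not None:                  # character touching the right edge
        chars.append([row[start:] for row in region])
    return chars


def read_sequence(image, box, classify_digit):
    """Recognize characters left to right and join them into one sequence."""
    region = crop_box(image, box)
    return "".join(str(classify_digit(c)) for c in segment_characters(region))


# Synthetic 10x30 image with three blobs of widths 2, 3, and 4 pixels.
image = [[0.0] * 30 for _ in range(10)]
for x_start, x_end in [(6, 8), (10, 13), (16, 20)]:
    for y in range(3, 7):
        for x in range(x_start, x_end):
            image[y][x] = 1.0

box = (5, 2, 25, 8)                        # stand-in for an SSD detection box
width_as_label = lambda char: len(char[0]) # stand-in for a LeNet classifier
sequence = read_sequence(image, box, width_as_label)
print(sequence)
```

The detector and the classifier are passed in rather than hard-coded, so the segmentation and sequence-assembly logic can be checked on synthetic data before any trained model is available.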


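Table 1. Comparison of detection accuracy and real-time performance of several methods under different noise conditions

| Noise | Method | Speed/(frames·s⁻¹) | Digital label accuracy/% | Personnel accuracy/% | Average accuracy/% |
| --- | --- | --- | --- | --- | --- |
| Ideal conditions | Fast R-CNN | 0.5 | 88.1 | 88.3 | 88.2 |
| Ideal conditions | Faster R-CNN | 7.0 | 90.2 | 90.6 | 90.4 |
| Ideal conditions | YOLO | 21.0 | 83.0 | 83.5 | 83.2 |
| Ideal conditions | SSD300 | 32.5 | 92.8 | 95.8 | 94.3 |
| Gaussian noise | Fast R-CNN | 0.5 | 88.0 | 88.3 | 88.1 |
| Gaussian noise | Faster R-CNN | 7.0 | 90.0 | 90.4 | 90.2 |
| Gaussian noise | YOLO | 21.0 | 82.7 | 83.3 | 83.0 |
| Gaussian noise | SSD300 | 32.0 | 92.5 | 95.6 | 94.1 |
| Salt-and-pepper noise | Fast R-CNN | 0.5 | 88.1 | 88.1 | 88.2 |
| Salt-and-pepper noise | Faster R-CNN | 8.0 | 89.8 | 90.3 | 90.1 |
| Salt-and-pepper noise | YOLO | 21.0 | 82.6 | 82.9 | 82.7 |
| Salt-and-pepper noise | SSD300 | 32.0 | 92.4 | 95.6 | 94.0 |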
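As a small worked example of how the average column in Table 1 appears to relate to the two per-class accuracies, the sketch below recomputes it for the ideal-condition rows. It assumes the average is the unweighted mean of the digital label and personnel accuracies, which the table does not state explicitly; `mean_accuracy` is a hypothetical helper name.

```python
# Ideal-condition rows of Table 1: (digital label accuracy, personnel accuracy) in %.
ideal = {
    "Fast R-CNN": (88.1, 88.3),
    "Faster R-CNN": (90.2, 90.6),
    "YOLO": (83.0, 83.5),
    "SSD300": (92.8, 95.8),
}


def mean_accuracy(label_acc, person_acc):
    """Unweighted mean of the two per-class accuracies (an assumption)."""
    return (label_acc + person_acc) / 2


means = {method: mean_accuracy(*acc) for method, acc in ideal.items()}
best = max(means, key=means.get)  # method with the highest mean accuracy

for method, value in means.items():
    print(f"{method}: {value:.2f}%")
print("Highest mean accuracy:", best)
```

Under this assumption the recomputed means agree with the tabulated averages to within rounding for these four rows, and SSD300 has the highest mean.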

